After a research run completes on the worker, the control plane on the VM can generate or rewrite structured paper artifacts when paper rows and evidence context are available. This process (optional evidence sync, artifact writing, packaging/provenance scanning, and paper review) is the review stage of the Enoch research loop. The output is a Markdown and LaTeX draft that should be grounded in run notes, metrics, evidence bundles, and claim ledgers collected during the experiment.

1. Confirm evidence exists

A complete corpus artifact usually includes paper.md, metadata.json, evidence_bundle.json, claim_ledger.json, and paper_manifest.json. Do not treat a draft as publishable if evidence or claim-ledger files are missing without an explicit explanation.
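A quick completeness check for the files listed above can be sketched as follows. This is a minimal illustration, not part of Enoch: the function name and the idea of treating empty files as missing are assumptions, and the artifact directory is whatever path holds the files on your control plane.

```python
from pathlib import Path

# File names come from the list above; everything else here is illustrative.
EXPECTED_FILES = (
    "paper.md",
    "metadata.json",
    "evidence_bundle.json",
    "claim_ledger.json",
    "paper_manifest.json",
)


def missing_artifact_files(artifact_dir: Path) -> list[str]:
    """Return the expected file names that are absent or empty in artifact_dir."""
    missing = []
    for name in EXPECTED_FILES:
        path = artifact_dir / name
        # Assumption: a zero-byte file is as unusable as a missing one.
        if not path.is_file() or path.stat().st_size == 0:
            missing.append(name)
    return missing
```

An empty return value means every expected file is present; a non-empty one is the list a reviewer would want explained before treating the draft as publishable.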

Before the artifact writer runs, the control plane should have access to the evidence the worker produced. If paper_evidence_sync_enabled is true, evidence sync runs when you trigger a draft rewrite; you can monitor its outcome in the rewrite response.

When evidence sync is enabled, the control plane attempts an HTTP sync first, reading evidence files directly from the worker’s wake gate API. If the worker returns the evidence, it is written into the local artifact root under the project directory. If the HTTP sync cannot retrieve the required high-signal evidence files, the control plane falls back to SSH, streaming a bounded tarball of evidence artifacts from the worker’s project directory.
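The HTTP-first, SSH-fallback order described above can be sketched with two injected fetch functions. This is a hedged illustration of the control flow only: `sync_evidence`, the fetcher callables, and the shape of the returned report are hypothetical and do not reflect the control plane's actual code or response format.

```python
from typing import Callable, Iterable

# A fetcher returns the names of the evidence files it managed to retrieve.
Fetcher = Callable[[], Iterable[str]]


def sync_evidence(http_fetch: Fetcher, ssh_fetch: Fetcher, required: set[str]) -> dict:
    """Try HTTP first; use SSH only if required files are still missing."""
    attempts = []
    found: set[str] = set()
    for method, fetch in (("http", http_fetch), ("ssh", ssh_fetch)):
        try:
            found |= set(fetch())
            attempts.append({"method": method, "ok": True})
        except Exception as exc:
            # A transport failure is recorded and must not abort the fallback.
            attempts.append({"method": method, "ok": False, "error": str(exc)})
        if required <= found:
            break  # everything required is present, so skip later methods
    return {
        "attempts": attempts,
        "missing": sorted(required - found),
        "complete": required <= found,
    }
```

Recording each attempt, including failures, gives the caller enough detail to explain what was tried when evidence is still missing afterwards.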

Evidence paths the control plane looks for include the papers/ directory and specific files under results/ (e.g. hot_cold_sim_results.json, smoke.json) rather than the entire results/ directory. The SSH fallback syncs the entire papers/ directory plus specific result files.

If paper_evidence_sync_enabled is true in your config and no local evidence is found after sync, the rewrite request returns HTTP 424 with an evidence_sync detail object explaining what was tried.
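A client calling the rewrite endpoint may want to treat HTTP 424 differently from other failures. The sketch below only interprets a status code and an already-parsed JSON body. The exact location of the evidence_sync object in the response is an assumption (both a top-level key and a nested `detail` key are checked), and `interpret_rewrite_response` is a hypothetical helper name.

```python
def interpret_rewrite_response(status_code: int, body: dict) -> dict:
    """Classify a rewrite response; 424 means evidence was missing after sync."""
    if status_code == 424:
        # Assumption: evidence_sync sits at the top level or under "detail".
        sync_detail = body.get("evidence_sync")
        if sync_detail is None and isinstance(body.get("detail"), dict):
            sync_detail = body["detail"].get("evidence_sync")
        return {"ok": False, "reason": "evidence_missing", "evidence_sync": sync_detail}
    if 200 <= status_code < 300:
        return {"ok": True, "reason": None, "evidence_sync": body.get("evidence_sync")}
    return {"ok": False, "reason": f"http_{status_code}", "evidence_sync": None}
```

Keeping the evidence_sync detail in the result lets an operator see which sync methods were tried without re-issuing the request.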

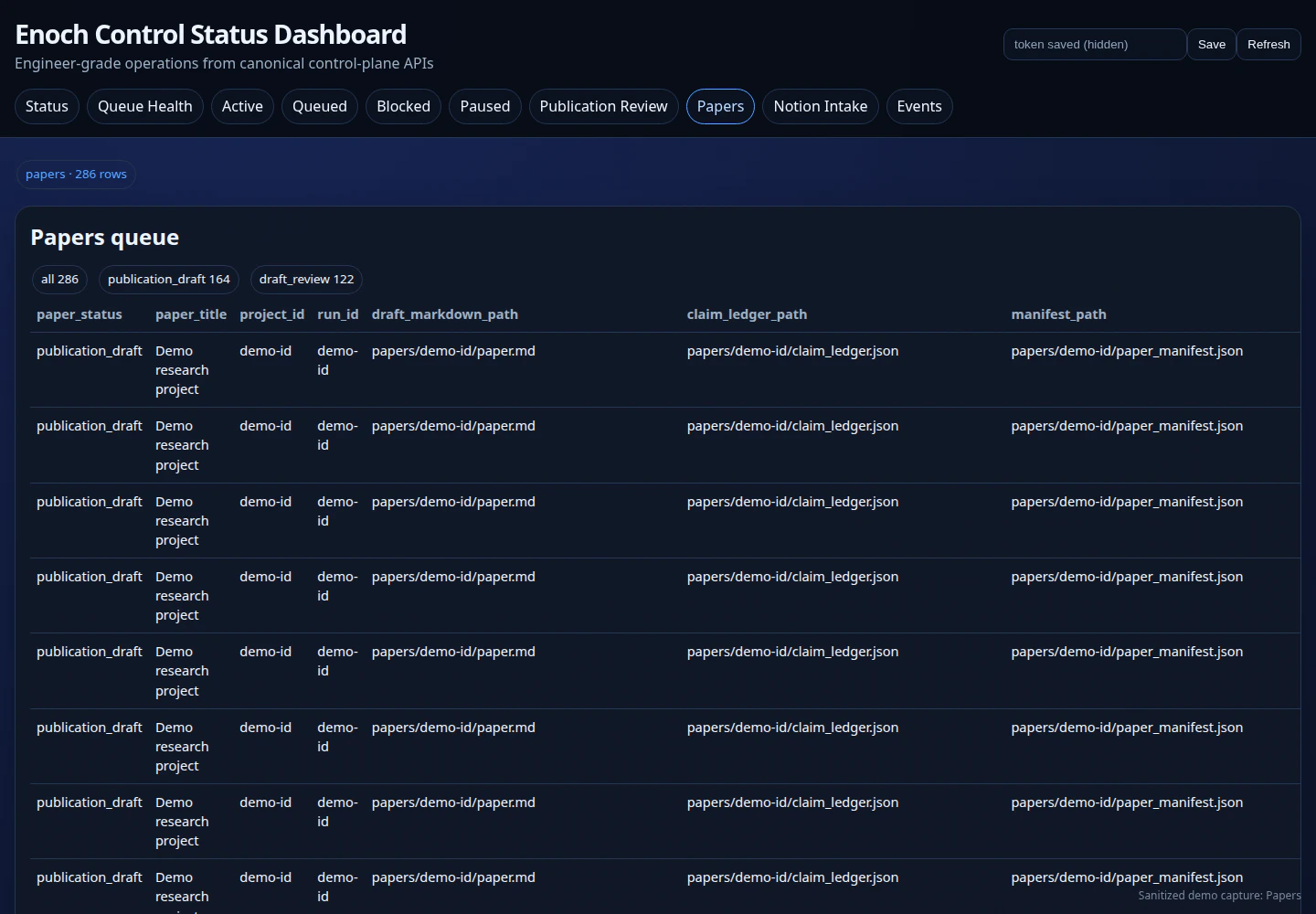

2. Generate Markdown and LaTeX

Once sufficient local evidence is present, the artifact writer runs against the evidence bundle and claim ledger to produce:

- papers/<run_id>/paper.md: Markdown draft
- papers/<run_id>/paper.tex: LaTeX draft
- papers/<run_id>/evidence_bundle.json: Evidence bundle
- papers/<run_id>/claim_ledger.json: Claim ledger
- papers/<run_id>/paper_manifest.json: Artifact manifest

The artifact writer is configured through the paper_writer_* settings. To test Synthetic.new with GLM-5.1, use:

If paper_writer_fallback_enabled is true, the writer falls back to a deterministic MVP draft if the configured provider is unavailable or returns an error.
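The fallback behavior can be pictured as a try/except around the provider call. This is a minimal sketch under stated assumptions: `write_draft`, `deterministic_draft`, and the returned source label are hypothetical names, and the real deterministic MVP draft is certainly richer than the stand-in below.

```python
from typing import Callable


def deterministic_draft(evidence: dict) -> str:
    """Hypothetical stand-in for the deterministic MVP draft: same input, same output."""
    lines = ["# Draft (deterministic fallback)"]
    for key in sorted(evidence):
        lines.append(f"- {key}: {evidence[key]}")
    return "\n".join(lines)


def write_draft(
    evidence: dict,
    provider: Callable[[dict], str],
    fallback_enabled: bool,
) -> tuple[str, str]:
    """Return (draft_text, source), where source is 'provider' or 'fallback'."""
    try:
        return provider(evidence), "provider"
    except Exception:
        if not fallback_enabled:
            raise  # surface the provider error when fallback is disabled
        return deterministic_draft(evidence), "fallback"
```

Returning the source alongside the text makes it easy to tell, when reviewing a draft, whether the configured provider or the fallback produced it.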

3. Scan packaging/provenance checks

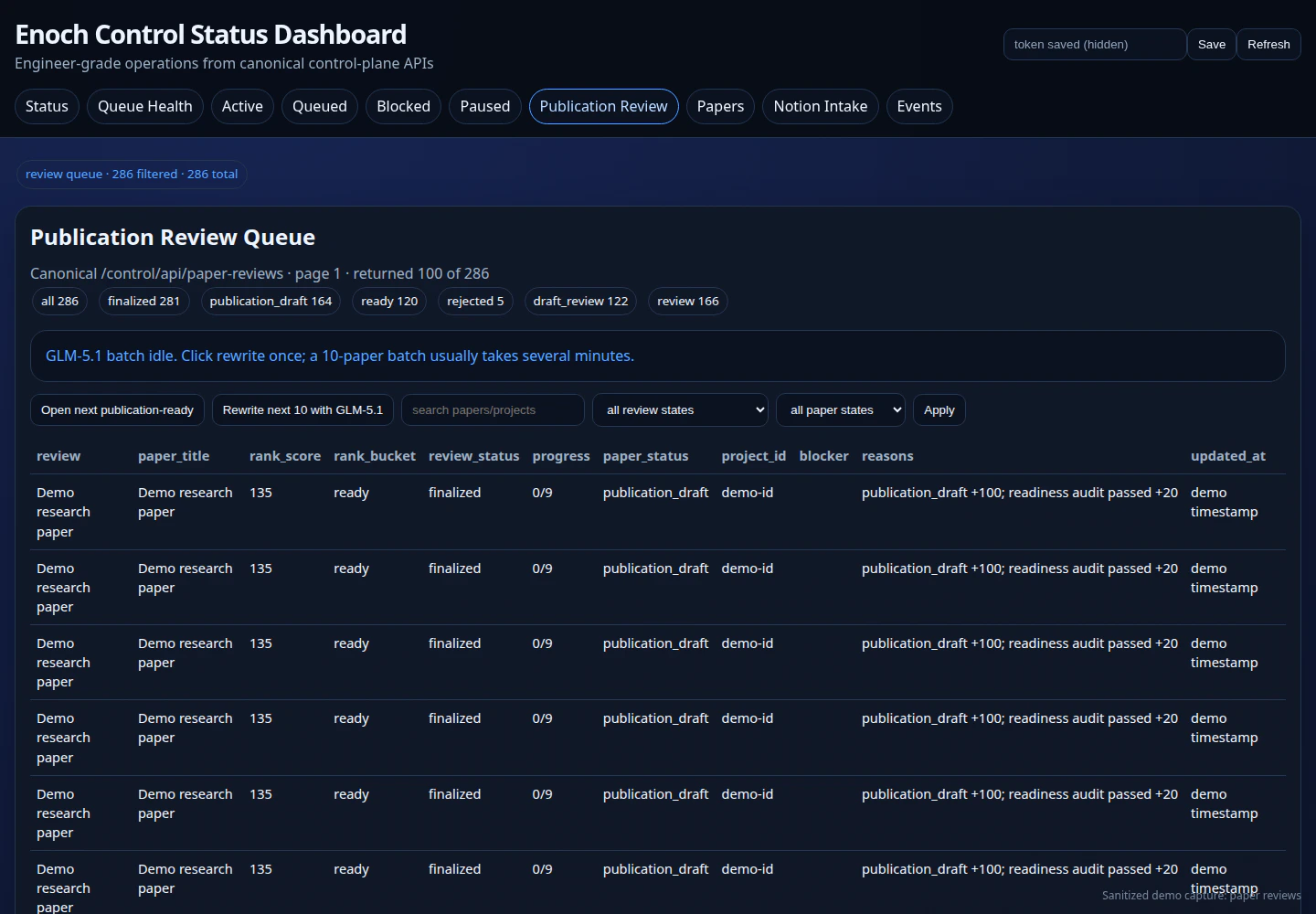

After the artifact is written, a review record is created in the paper review queue with a review_status of unreviewed or triage_ready. The packaging/provenance check includes a ranked checklist of items the reviewer must pass, fail, or accept-as-risk before the paper can be approved for finalization. It does not validate peer review, scientific correctness, or independent replication.
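One way to reason about the checklist gate is as a function that lists what still blocks approval. The sketch below is illustrative only: the `ChecklistItem` fields and decision strings are hypothetical, and treating a failed item as a blocker is an assumption, since the text above only says every item needs a decision.

```python
from dataclasses import dataclass

# Hypothetical decision labels mirroring pass, fail, and accept-as-risk.
RESOLVED = {"pass", "fail", "accept_as_risk"}


@dataclass
class ChecklistItem:
    rank: int
    label: str
    decision: str = "pending"


def approval_blockers(items: list[ChecklistItem]) -> list[str]:
    """Return reasons, in rank order, why the paper cannot yet be approved."""
    blockers = []
    for item in sorted(items, key=lambda i: i.rank):
        if item.decision not in RESOLVED:
            blockers.append(f"{item.label}: no decision recorded")
        elif item.decision == "fail":
            # Assumption: a failed item blocks approval until it is re-reviewed.
            blockers.append(f"{item.label}: failed")
    return blockers
```

An empty list means every item has been passed or accepted as a risk; it says nothing about scientific correctness, which the checklist does not cover.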

enoch-paper-draft-next.timer can automate this path after you have tested it.

4. Review generated drafts

Paper review APIs support claiming review items, updating checklist items, changing review status, rewriting drafts, approving finalization, and preparing finalization packages. The dashboard exposes the same concepts for operators, but generated prose still needs human review.

5. Rewrite with an optional provider

If configured, Synthetic.new can rewrite draft content through an OpenAI-compatible endpoint. Keep paper_writer_fallback_enabled and deterministic fallback behavior in mind when interpreting output.
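For orientation, a request to an OpenAI-compatible chat completions endpoint generally has the shape sketched below. This is not Enoch's rewrite code: `build_rewrite_request` is a hypothetical helper, the base URL, API key, and model identifier are placeholders you would take from your own provider settings, and the system prompt is only an example. The request is built but not sent.

```python
import json
import urllib.request


def build_rewrite_request(
    base_url: str, api_key: str, model: str, draft_markdown: str
) -> urllib.request.Request:
    """Build (without sending) a chat-completions request that asks for a rewrite."""
    payload = {
        "model": model,  # placeholder: use the model id your provider documents
        "messages": [
            {
                "role": "system",
                "content": "Rewrite the draft for clarity. "
                "Do not add claims that the evidence does not support.",
            },
            {"role": "user", "content": draft_markdown},
        ],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the result to `urllib.request.urlopen` would send it. Wrapping that call in error handling is where a deterministic fallback would take over if the provider is unavailable.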